What is Reselected Key Photo Restoration in Live Photos?

A sub-category of RefSR that departs from conventional paradigms

• RefISR: external reference database

• RefVSR: full video restoration

• SISR: no reference

• Ours: in-sequence reference with content consistency

Abstract

A Live Photo captures both a high-quality key photo and a short video clip to preserve the precious dynamics around the captured moment. While users may choose alternative frames as the key photo to capture better expressions or timing, these frames often exhibit noticeable quality degradation, because the photo capture ISP pipeline delivers significantly higher image quality than the video pipeline. This quality gap highlights the need for dedicated restoration techniques to enhance the reselected key photo. To this end, we propose LiveMoments, a reference-guided image restoration framework tailored for the reselected key photo in Live Photos. Our method employs a two-branch neural network: a reference branch that extracts structural and textural information from the original high-quality key photo, and a main branch that restores the reselected frame using the guidance provided by the reference branch. Furthermore, we introduce a unified Motion Alignment module that incorporates motion guidance for spatial alignment at both the latent and image levels. Experiments on real and synthetic Live Photos demonstrate that LiveMoments significantly improves perceptual quality and fidelity over existing solutions, especially in scenes with fast motion or complex structures.

Method

Overall Pipeline

Our setting is characterized by temporal dependency and reference-target correlation, and effective restoration of the reselected key photo requires leveraging fine-grained details from the reference frame. To achieve this, LiveMoments consists of three key components: a RestorationNet that performs conditional denoising on the noisy latent of the reselected frame; a ReferenceNet that encodes the original key photo to provide high-quality guidance; and a unified Motion Alignment module that achieves fine-grained alignment at both the latent and image levels.
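How the two branches could interact during sampling is sketched below. This is a schematic under stated assumptions, not the LiveMoments implementation: `reference_net` and `restoration_net` are hypothetical callables standing in for ReferenceNet and RestorationNet, the deterministic DDIM update is a sampler chosen only for illustration, and the reference features are computed once outside the loop on the assumption that the clean key photo does not depend on the timestep.

```python
import numpy as np


def ddim_step(z_t, eps, a_t, a_prev):
    """One deterministic DDIM update (eta = 0) between cumulative alphas a_t and a_prev."""
    z0_hat = (z_t - np.sqrt(1.0 - a_t) * eps) / np.sqrt(a_t)  # predicted clean latent
    return np.sqrt(a_prev) * z0_hat + np.sqrt(1.0 - a_prev) * eps


def restore(z_T, lq_latent, ref_latent, flow, reference_net, restoration_net, alphas):
    """Schematic two-branch sampling loop (hypothetical interfaces, not the paper's code).

    lq_latent:  latent of the degraded reselected frame (the denoising condition).
    ref_latent: latent of the original high-quality key photo.
    reference_net(ref_latent) -> reference features.
    restoration_net(z_t, step, lq_latent, ref_feats, flow) -> predicted noise.
    alphas: cumulative alpha-bar values ordered from noisiest to cleanest.
    """
    # Assumption: the clean key photo is encoded once and reused at every step.
    ref_feats = reference_net(ref_latent)
    z = z_T
    for step in range(len(alphas) - 1):
        eps = restoration_net(z, step, lq_latent, ref_feats, flow)
        z = ddim_step(z, eps, alphas[step], alphas[step + 1])
    return z
```

Ending the `alphas` schedule at 1.0 makes the final update return the predicted clean latent directly, which would then be decoded back to the image.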

Latent Space Motion Alignment

Motion blur and subject displacement in the reselected frame make feature fusion with the reference unreliable. To address this, we design a motion-guided attention that introduces explicit motion guidance into the latent space. We encode the estimated optical flow into motion embeddings and incorporate them into the cross-attention mechanism as an additive bias on the reference key features.

This enables the query to attend to aligned regions, improving restoration in misaligned scenarios.
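A minimal sketch of this idea, assuming standard scaled dot-product cross-attention in which the keys become $K = X_{\text{ref}} W_k + E_m$ with motion embedding $E_m = F W_m$: queries come from the reselected frame, keys and values from the original key photo. The linear motion encoder `W_m`, the per-reference-token flow layout, and all function names are hypothetical; the page does not specify how the flow is embedded.

```python
import numpy as np


def softmax(x, axis=-1):
    """Numerically stable softmax along one axis."""
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def motion_guided_cross_attention(q_feats, ref_feats, flow, W_q, W_k, W_v, W_m):
    """Cross-attention with a motion embedding added to the reference keys (sketch).

    q_feats:   (N, C) tokens of the reselected frame (queries).
    ref_feats: (M, C) tokens of the original key photo (keys and values).
    flow:      (M, 2) optical flow sampled at the reference token positions (assumption).
    W_m:       (2, D) hypothetical linear encoder turning flow into motion embeddings.
    """
    Q = q_feats @ W_q
    K = ref_feats @ W_k + flow @ W_m  # additive motion bias on the reference keys
    V = ref_feats @ W_v
    scores = Q @ K.T / np.sqrt(Q.shape[-1])
    return softmax(scores, axis=-1) @ V
```

Setting `W_m` to zero recovers plain cross-attention, so the motion term acts purely as a learned correction to which reference tokens each query favors.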

Image Space Motion Alignment

Live Photos typically have ultra-high resolutions, which require patch-wise inference through a tiling strategy. Because of subject motion, a target patch and the reference patch at the same location are often misaligned at the pixel level. Therefore, we propose a Patch Correspondence Retrieval (PCR) strategy to retrieve spatially aligned reference patches.
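Tiled inference with per-tile reference retrieval can be sketched as follows. The page does not describe how PCR finds its matches, so this sketch swaps in plain exhaustive block matching (mean absolute difference over integer offsets within a search radius) as a stand-in. `tile_origins`, `retrieve_reference_patch`, `tile`, `stride`, and `radius` are hypothetical names, and both images are assumed to share the same resolution.

```python
import numpy as np


def tile_origins(height, width, tile, stride):
    """Top-left corners of overlapping tiles; the last row/column is clamped to the border."""
    last_y, last_x = max(height - tile, 0), max(width - tile, 0)
    ys = list(range(0, last_y + 1, stride))
    xs = list(range(0, last_x + 1, stride))
    if ys[-1] != last_y:
        ys.append(last_y)
    if xs[-1] != last_x:
        xs.append(last_x)
    return [(y, x) for y in ys for x in xs]


def retrieve_reference_patch(target, reference, y, x, tile, radius):
    """Return the reference crop that best matches target[y:y+tile, x:x+tile].

    Stand-in for PCR (assumption): exhaustive block matching that scores every
    integer offset within +/- radius by mean absolute difference.
    """
    t_patch = target[y:y + tile, x:x + tile].astype(np.float64)
    H, W = reference.shape[:2]
    best, best_cost = (y, x), np.inf
    for dy in range(-radius, radius + 1):
        for dx in range(-radius, radius + 1):
            ry, rx = y + dy, x + dx
            if ry < 0 or rx < 0 or ry + tile > H or rx + tile > W:
                continue  # candidate crop would fall outside the reference image
            crop = reference[ry:ry + tile, rx:rx + tile].astype(np.float64)
            cost = np.abs(crop - t_patch).mean()
            if cost < best_cost:
                best, best_cost = (ry, rx), cost
    ry, rx = best
    return reference[ry:ry + tile, rx:rx + tile], best
```

Clamping the last tile to the image border guarantees full coverage without padding; each target tile is then restored together with its retrieved reference crop instead of the co-located one.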

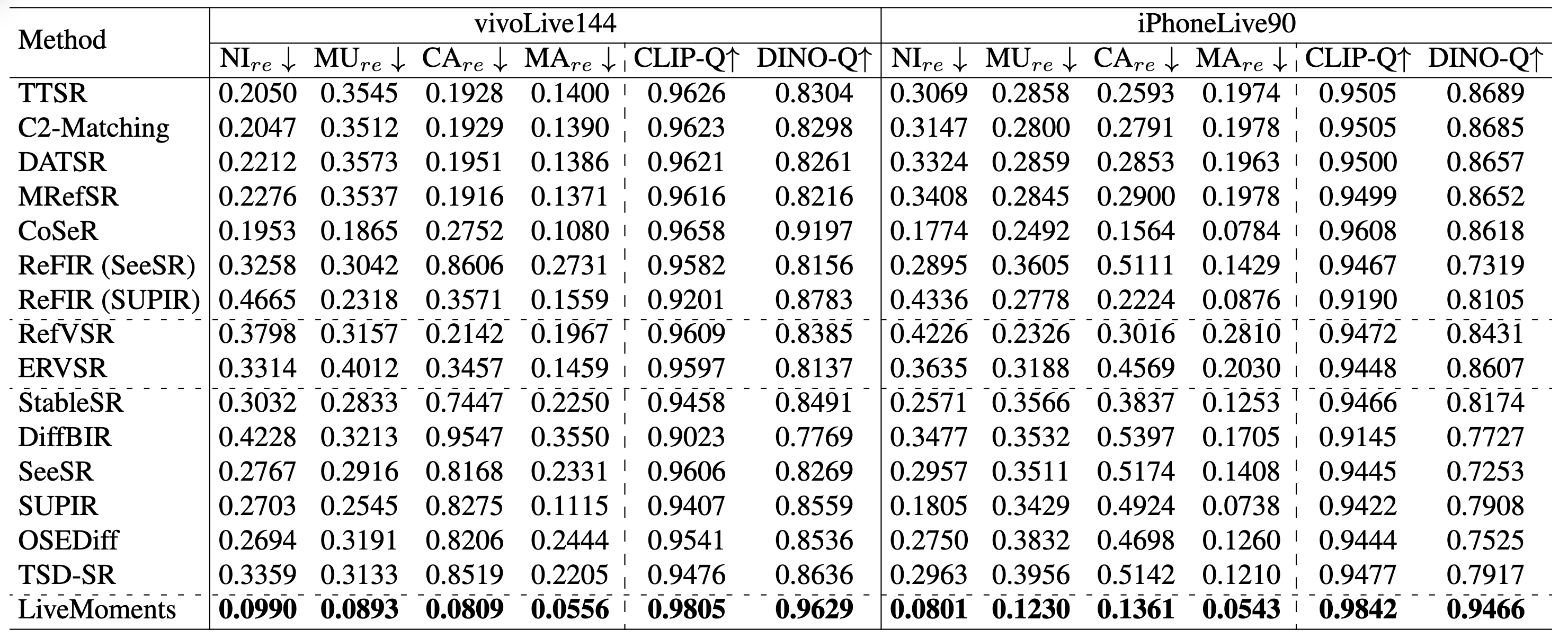

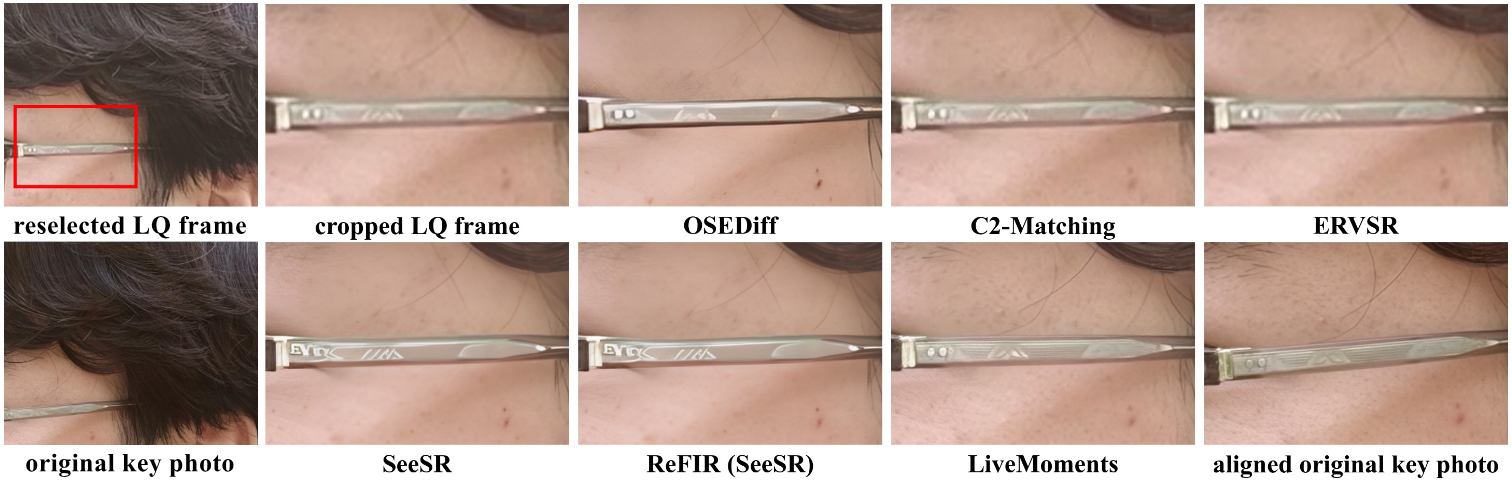

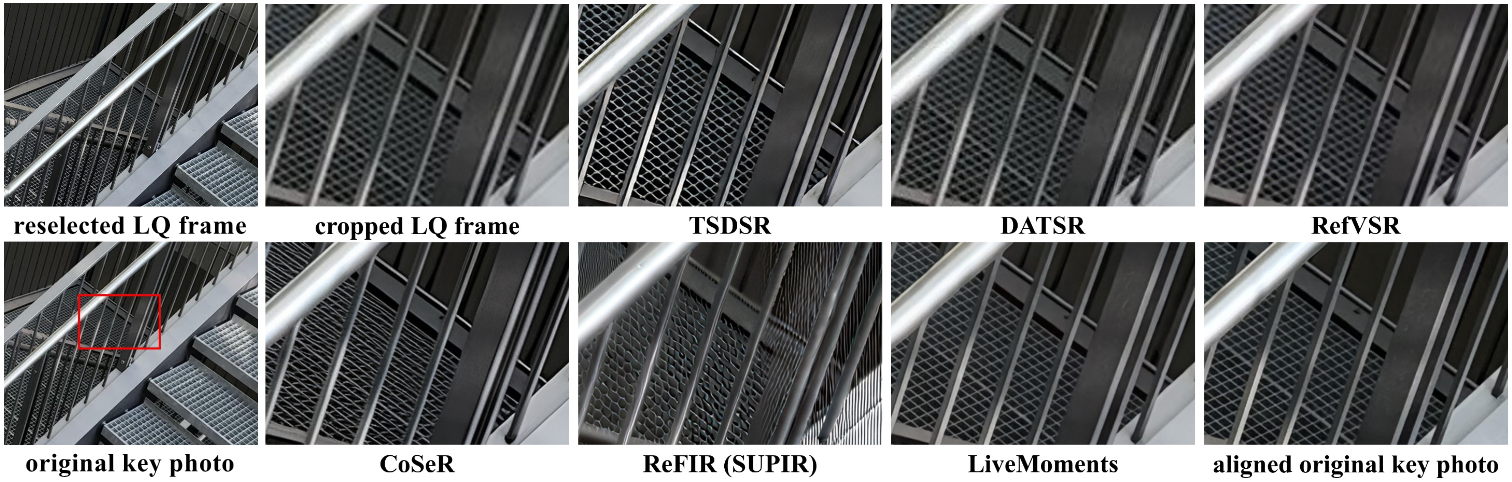

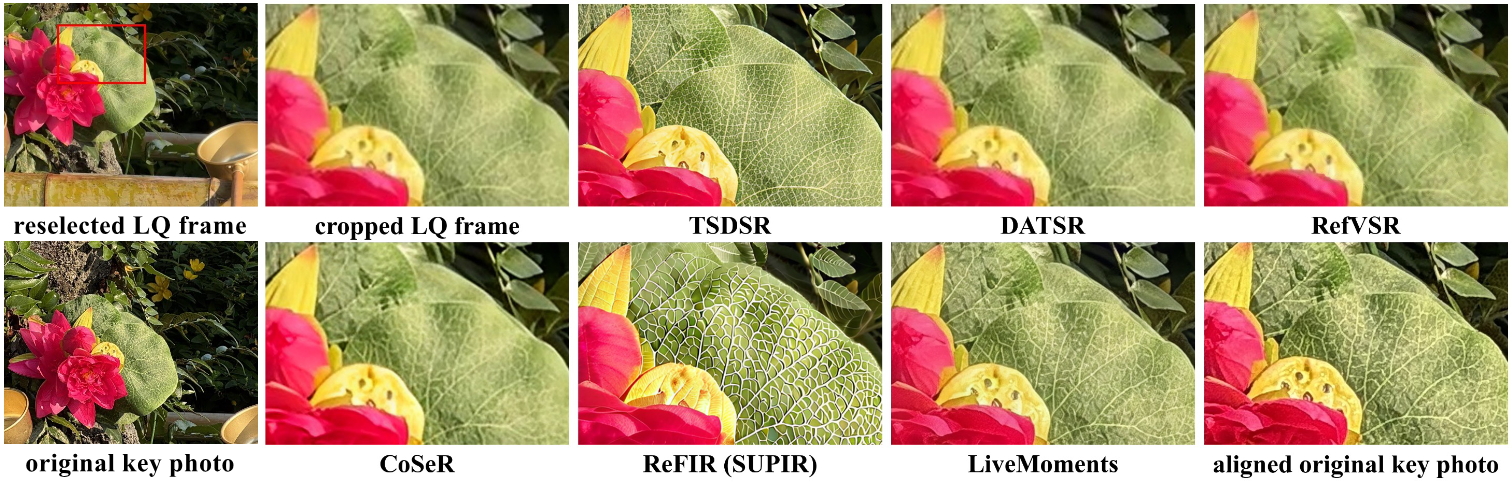

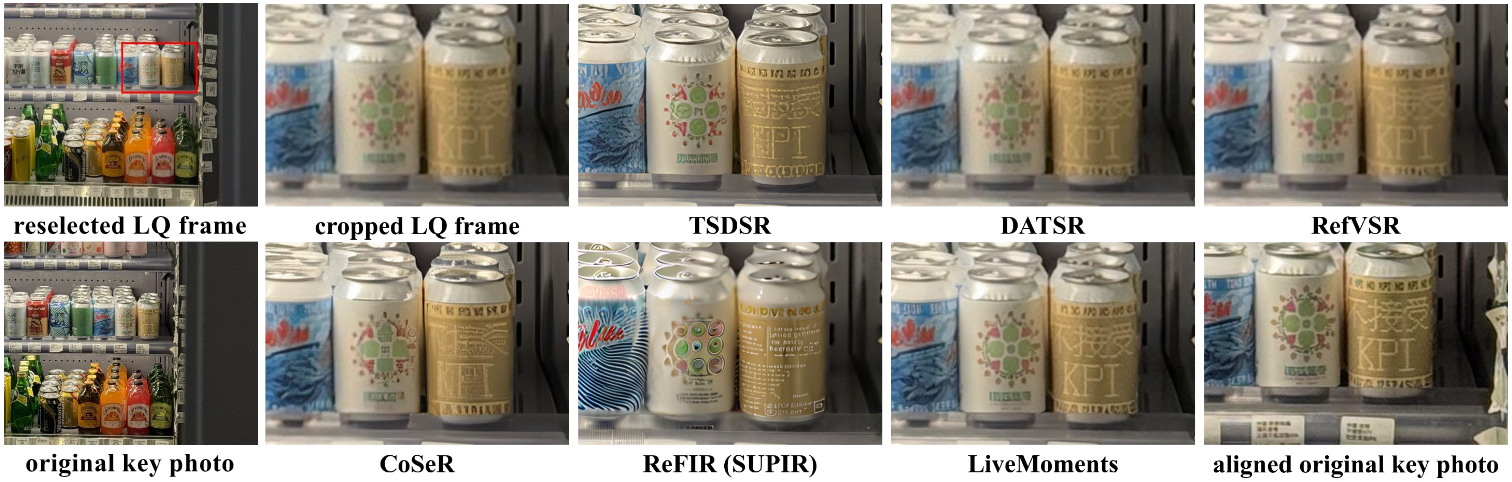

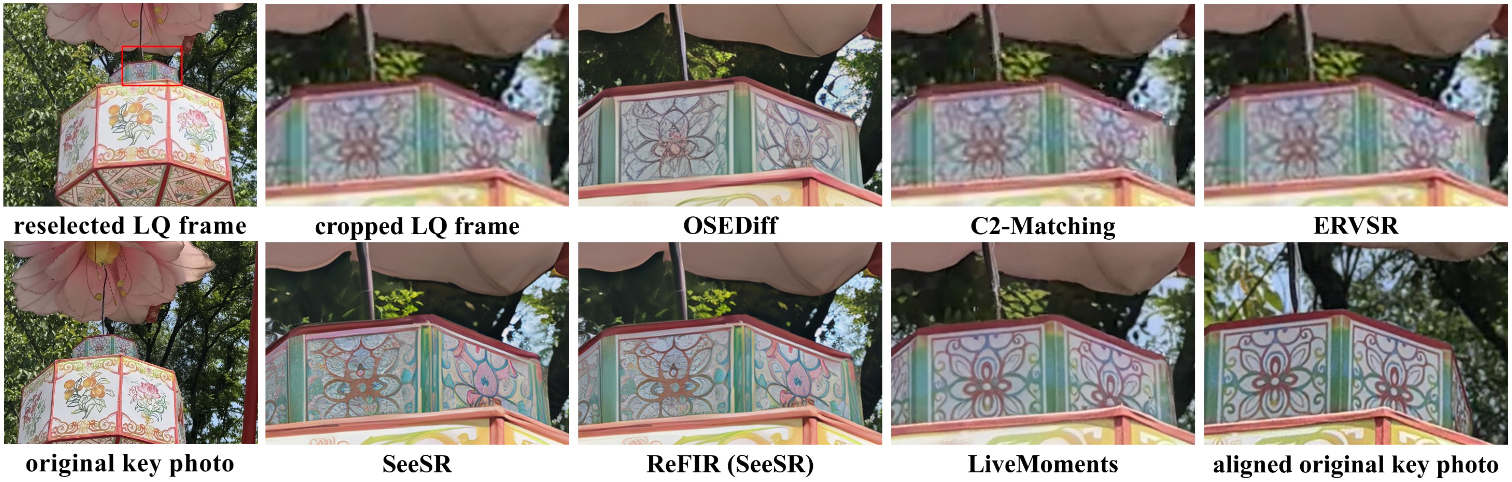

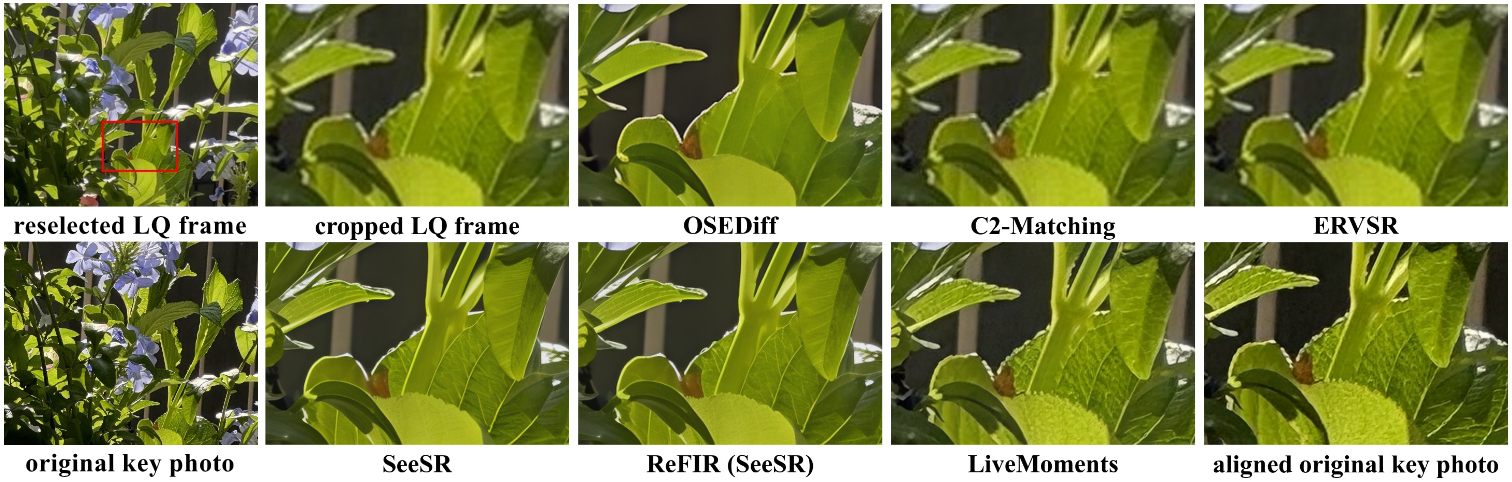

Results on Real-World Live Photos

We evaluate on two real-world Live Photo datasets (vivoLive144 and iPhoneLive90) captured across diverse scenes, along with a synthetic dataset. To better assess perceptual quality, we adapt no-reference metrics to our reference-based setting.

Quantitative Results

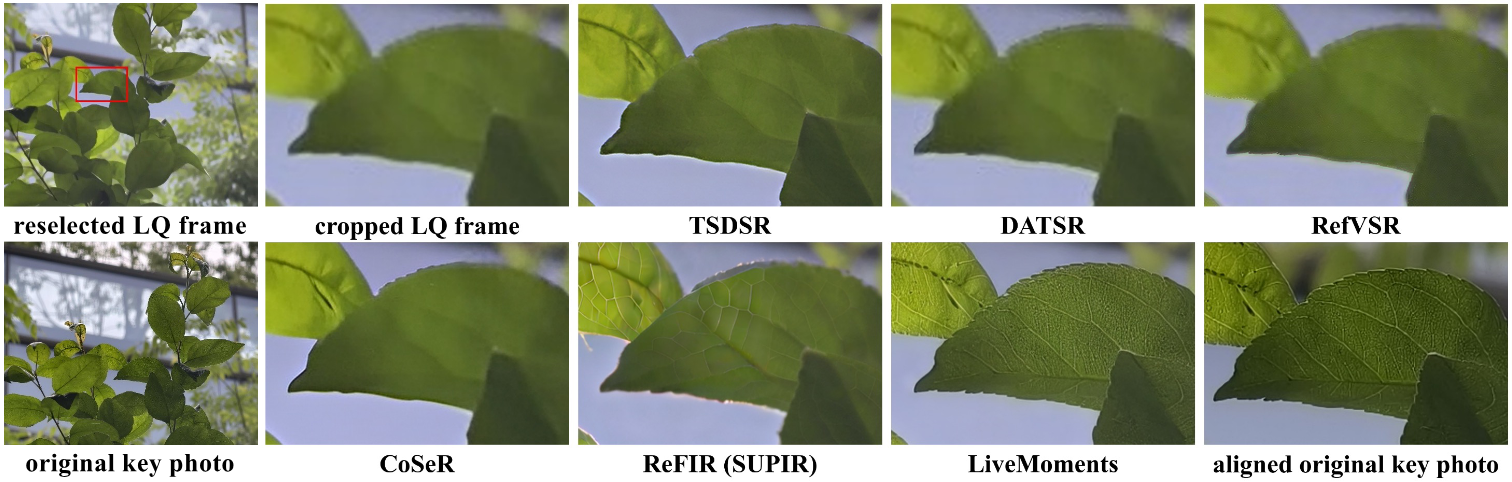

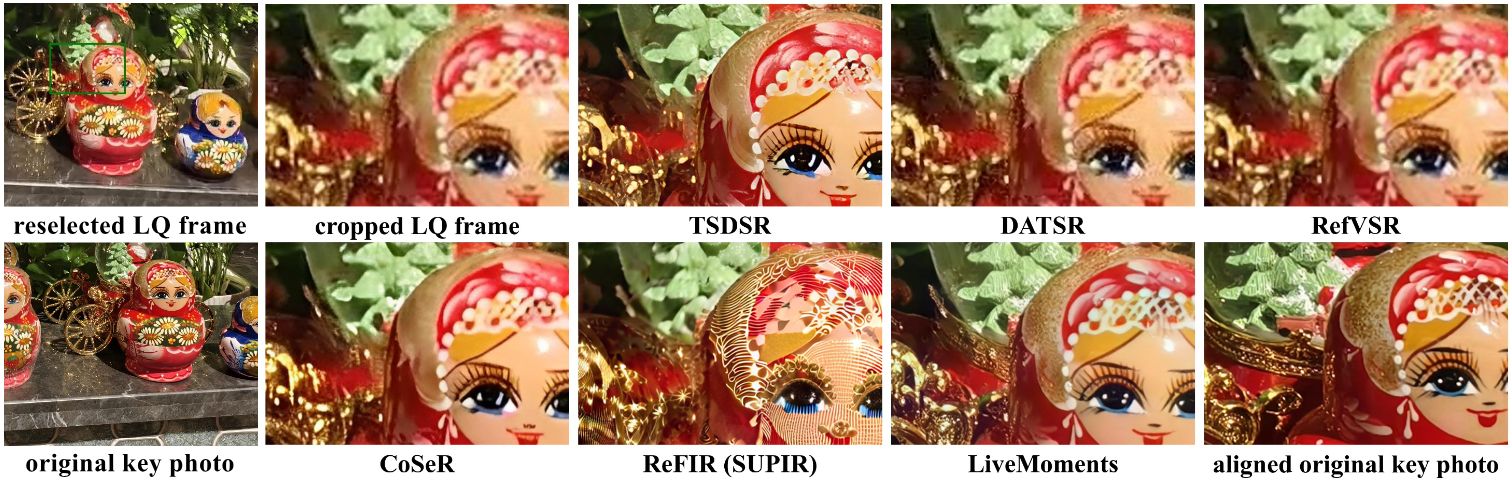

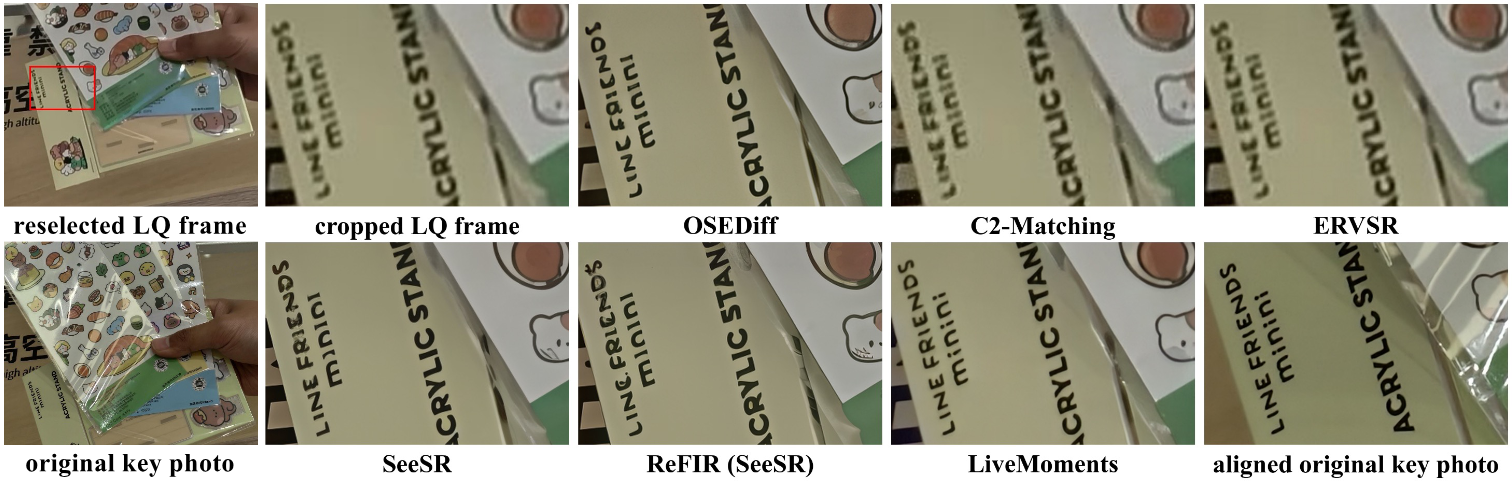

Visual Results

• vivoLive144 (captured with vivo X200 Pro)

• iPhoneLive90 (captured with iPhone 15 Pro)

BibTeX

@inproceedings{xue2026livemoments, title={LiveMoments: Reselected Key Photo Restoration in Live Photos via Reference-guided Diffusion}, author={Clara Xue and Zizheng Yan and Zhenning Shi and Yuhang Yu and Jingyu Zhuang and Qi Zhang and Jinwei Chen and Qingnan Fan}, booktitle={The Fourteenth International Conference on Learning Representations}, year={2026}, url={https://openreview.net/forum?id=02mgFnnfqG}}